The term ‘Digital Twin’ has recently become popular in BIM & Digital Engineering circles.

The idea is that if you are the owner or operator of an asset, such as a building or railway system, you have a digital version of the physical asset which can be used for operational purposes, analysis or future development.

Tie this in with ‘internet of things’ technology, which receives data from sensors or interfaces with systems such as security, ventilation or building management, and you have a living, breathing digital model.

It is a very exciting and compelling concept.

The challenge: data quantity vs quality

It is easy to think that since most design and construction is carried out digitally these days, the process would be quite simple. Press a button at the end of the project to turn a design model into a digital twin: job done.

However, the challenges are:

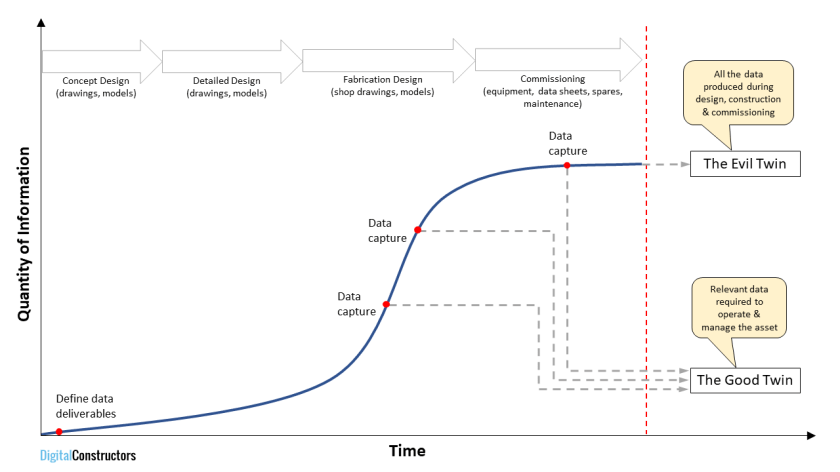

- The amount of data generated during the design and construction phase is absolutely enormous

- For most operational purposes, only a small subset of this data is required

- Data in the digital twin needs to be trustworthy and to be actively maintained

In other words, a good digital twin should contain relevant information that is kept up to date. Much of the data that was required to build the asset is no longer relevant beyond construction completion.
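To make the idea of a relevant subset concrete, here is a minimal sketch in Python. It assumes the operator has already agreed a short list of attributes they actually need; the attribute names and the sample record are hypothetical and not taken from any real schema.

```python
# Hypothetical list of attributes the operator has asked for.
# On a real project this would be agreed with the asset owner up front.
OPERATIONAL_ATTRIBUTES = {
    "asset_id",
    "location",
    "manufacturer",
    "service_interval_months",
}


def to_operational_record(design_record: dict) -> dict:
    """Keep only the attributes that are on the agreed operational list."""
    return {
        key: value
        for key, value in design_record.items()
        if key in OPERATIONAL_ATTRIBUTES
    }


# Illustrative design-phase record: most of it only mattered during construction.
design_record = {
    "asset_id": "AHU-01",
    "location": "Level 3 plant room",
    "manufacturer": "ExampleCo",
    "service_interval_months": 6,
    "formwork_stripping_date": "2021-03-14",
    "clash_detection_status": "resolved",
    "design_option": "B",
}

operational_record = to_operational_record(design_record)
```

The filter itself is trivial. The hard part is agreeing what belongs on the list, which is a business decision rather than a technical one.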

As I’ve explained elsewhere on this blog, a common misconception is that the more data you have, the better. The difficulty in producing a digital twin is in managing the balance between the quantity and quality (or value) of certain data.

Too much information

As an analogy, a car comes with just a handbook that describes what the tyre pressures should be and what kind of oil to use. The instruments display key information such as the car speed, temperature and engine RPM. You aren’t carting around the workshop manual and all the detailed engineering models or drawings that went into the car production in the first place. It is simply too much information.

The owner or operator needs to know key information about the asset and the data needs to be up to date and to be easily accessed. Most asset managers are not BIM experts.

The Evil Digital Twin

“Grady Twins” by jrmyst is licensed under CC BY-NC 2.0


The concept of a good twin/bad twin is found in various literature and film, such as Beowulf or the doppelgänger, where two apparently identical things are quite different. The blue frilly frocks of the Grady sisters in Stephen King/Stanley Kubrick’s ‘The Shining’ are still terrifying to me. The good Spock/bad Spock of Star Trek was a little less serious (bad Spock had facial hair).

I’m going to apply this notion to the digital twin. The digital twin turns bad if:

- it contains overwhelming amounts of superfluous information

This occurs when data from the design and construction phase is taken without any filters being applied. The valuable information in the digital twin is now obscured by irrelevant information.

- the effort in maintaining the data outweighs the value of having it

To have any value, the digital twin needs to be kept up to date beyond construction completion. For most organisations, it is not feasible to employ architects and engineers to use the same applications that were used to author models in the first place. Furthermore, if part of the digital twin becomes outdated, then trust in the whole digital twin is lost.

- the system used to access the digital twin is inappropriate

Similar to the above bullet point, there is little point in having a digital twin if the information cannot be readily accessed. For example, using the original authoring tools might take 10+ minutes to open a complex model and even longer to locate the required information.
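The point about outdated data can be sketched as a simple staleness check. This is only an illustration: it assumes each record carries a hypothetical `last_verified` date and that a 365-day review period is acceptable, neither of which comes from a real system or standard.

```python
from datetime import date, timedelta


def stale_records(records, today, max_age_days=365):
    """Return the IDs of records not verified within the review period."""
    cutoff = today - timedelta(days=max_age_days)
    return [r["asset_id"] for r in records if r["last_verified"] < cutoff]


# Illustrative records; the field names and dates are made up.
records = [
    {"asset_id": "AHU-01", "last_verified": date(2024, 1, 10)},
    {"asset_id": "FD-12", "last_verified": date(2021, 6, 1)},
]

overdue = stale_records(records, today=date(2024, 6, 1))
```

The fewer records the twin holds, the more realistic it is to keep a list like this empty.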

Therefore, the bad version of the digital twin can have a significant initial cost as well as minimal value, whereas the good twin can be a significant asset.

I’ve encountered the bad twin on projects where there is a contract requirement for ‘all data’ and it is assumed that relevant information can simply be extracted at project completion. This is like unscrambling an egg, i.e. impossible to do retrospectively.

Deciding which information is relevant needs to be considered and addressed progressively throughout the project.

How to get the good twin

For a digital twin to be of value, a targeted and focussed approach is necessary. Rather than taking a ‘more is better’ approach to digital asset data, examine thoroughly and with discernment where the digital twin can provide a benefit.

I would challenge the assumption that a digital twin is the culmination of an ever-increasing generation of data. I would argue that the good twin should contain carefully selected data chosen for its relevance and value to the present and future operation of the asset.

Recommendations

For clients:

- Define upfront what information is valuable and useful in a ‘digital twin’. Don’t assume you need all data from your designers and contractors

- Avoid blanket contract requirements for ‘all data’: it comes at a cost

- Consider who will use each piece of information in the digital twin and the benefit that it has to your organisation

- Consider how the digital twin will be maintained and the resources, skills and systems required

- Focus on areas of highest value, for example fire safety, energy efficiency, essential services or rental utilisation

- Don’t get bogged down in technical details (such as Uniclass schemas, IFC or COBie attributes) too early. Treat it as a business decision first & foremost and develop an appropriate strategy
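One way a client might record these decisions is a simple register of information requirements, where every entry has to name who uses it, what the benefit is and who maintains it. The sketch below is a hypothetical structure in Python, not an established schema; the class, its fields and the sample entries are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class InformationRequirement:
    """One piece of information the client wants in the digital twin."""
    name: str
    used_by: str        # who in the organisation will use it
    benefit: str        # why it is worth having
    maintained_by: str  # who keeps it up to date after handover


def unjustified(requirements):
    """Return the names of requirements missing a user, benefit or maintainer."""
    return [
        r.name
        for r in requirements
        if not (r.used_by and r.benefit and r.maintained_by)
    ]


# Illustrative register entries.
register = [
    InformationRequirement(
        name="Fire damper locations",
        used_by="Facilities team",
        benefit="Statutory fire safety inspections",
        maintained_by="Maintenance contractor",
    ),
    InformationRequirement(
        name="Rebar schedules",
        used_by="",
        benefit="",
        maintained_by="",
    ),
]

to_challenge = unjustified(register)
```

Anything that ends up in `to_challenge` is a candidate for leaving out of the contract requirements altogether.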

For designers and contractors:

- The best approach for your business is to provide something of value to your client (not necessarily what they ask for). Work collaboratively with your client to identify which data is and is not required, and focus on the highest value areas.

- Do not think the easy way out is just to give the client everything

- Address this issue as early as possible and do not put it into the ‘worry about it later’ basket. In many cases, decisions and agreements at the outset of a project will significantly influence the outcome, effort and cost. To be successful, the data that will comprise the digital twin needs to be developed progressively.
